Introduction

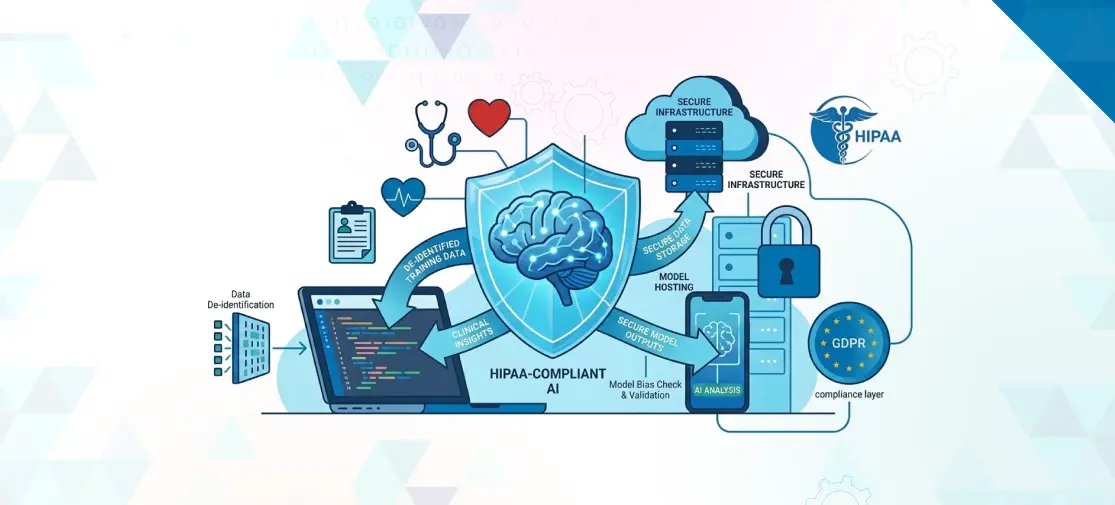

Healthcare teams are excited about AI for a good reason. It can speed up admin work, improve documentation, support decision-making, and create better patient experiences. But the moment AI touches patient data, the conversation changes. It is no longer just about innovation. It is about privacy, trust, safety, and legal responsibility.

That is why HIPAA-compliant AI development matters so much.

If your healthcare product uses AI to process, store, or analyze protected health information, you need more than a smart model. You need the right safeguards, the right development process, and the right partner. In simple terms, HIPAA is there to make sure patient information stays protected. And when AI enters the picture, that protection needs to be even stronger.

This guide breaks down what HIPAA-compliant AI means, what the law actually requires, the risks many teams miss, and how to build HIPAA-compliant AI systems in a practical, human-friendly way.

No AI Tool Is “HIPAA Certified”: Here’s What That Actually Means

One of the biggest misconceptions in the market is the phrase “HIPAA-certified AI.”

There is no official government label that makes an AI tool “HIPAA certified.” In fact, HHS says it does not certify any persons or products as “HIPAA compliant.”

So what does that mean for buyers and healthcare startups?

It means compliance is not a badge you buy. It is a process you build. A vendor, platform, or AI product may support HIPAA compliance, but the real question is this: how is the system designed, deployed, accessed, monitored, and governed when it handles protected health information? That is the heart of HIPAA-compliant AI software development.

In other words, a model is not compliant by itself. The full environment around it must be compliant.

What HIPAA Actually Requires When AI Touches Patient Data

HIPAA is not an “AI law.” It is a privacy and security framework that applies whenever covered entities and business associates handle protected health information. HHS explains that the Security Rule requires administrative, physical, and technical safeguards to protect electronic protected health information, and that business associates are directly responsible for key Security Rule obligations too.

For AI projects, that usually means a few big things:

First, only the right people should have access to patient data. HIPAA’s “minimum necessary” standard says organizations must make reasonable efforts to use, disclose, and request only the minimum PHI needed for the purpose.

Second, if you work with a cloud vendor, AI platform provider, or development company that handles PHI on your behalf, you likely need a Business Associate Agreement. HHS says covered entities must include protections in business associate contracts when contractors perform services involving PHI.

Third, your AI workflow must protect confidentiality, integrity, and availability. In simple terms: patient data must stay private, accurate, and accessible to authorized users when needed.

That is why HIPAA obligations for AI projects are not just about where data is stored. They also affect access controls, logging, encryption, contracts, risk analysis, incident response, and testing.
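One way to picture the “minimum necessary” idea in an AI pipeline is a filter that hands each workflow only the fields approved for its purpose. The sketch below is illustrative only: the purposes, field names, and the `minimum_necessary` helper are assumptions made for this example, not terms defined by HIPAA or by any library.

```python
from typing import Any

# Hypothetical mapping from a processing purpose to the fields it may see.
# The purposes and field names are illustrative assumptions, not HIPAA terms.
ALLOWED_FIELDS: dict[str, frozenset[str]] = {
    "appointment_reminder": frozenset({"first_name", "appointment_time", "clinic_phone"}),
    "note_summarization": frozenset({"visit_note", "medications", "allergies"}),
}


def minimum_necessary(record: dict[str, Any], purpose: str) -> dict[str, Any]:
    """Return only the fields approved for the given purpose."""
    if purpose not in ALLOWED_FIELDS:
        # Fail closed: an unknown purpose gets no data at all.
        raise PermissionError(f"No approved field list for purpose: {purpose!r}")
    allowed = ALLOWED_FIELDS[purpose]
    return {key: value for key, value in record.items() if key in allowed}


# Synthetic record used only for demonstration.
patient = {
    "first_name": "Ana",
    "last_name": "Example",
    "ssn": "000-00-0000",
    "appointment_time": "2025-03-04T09:30",
    "clinic_phone": "555-0100",
    "visit_note": "Follow-up visit.",
}

reminder_payload = minimum_necessary(patient, "appointment_reminder")
print(sorted(reminder_payload))
```

The design choice worth noting is that the filter fails closed. A purpose nobody has reviewed raises an error instead of quietly passing the full record to a model or vendor.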

The 2025 HIPAA Security Rule Updates You Can’t Ignore

This is where many blogs get sloppy, so let’s be precise.

In late December 2024, HHS OCR issued a Notice of Proposed Rulemaking to strengthen the HIPAA Security Rule. The proposal was published in the Federal Register in January 2025. HHS also makes clear that while this rulemaking is underway, the current Security Rule remains in effect. So these are major proposed changes, not a final rule you can treat as already enacted.

Still, they matter a lot because they show where enforcement and expectations are heading.

On the documentation and governance side, the proposal includes more specific requirements around written technology asset inventories and network maps, stronger written risk analysis, annual compliance audits, and written procedures to restore certain systems and data within 72 hours.

On the technical side, it includes encryption of ePHI at rest and in transit with limited exceptions, multi-factor authentication, vulnerability scanning at least every six months, penetration testing at least annually, network segmentation, and backup and recovery controls.

For anyone building HIPAA-compliant AI development programs, this is a big signal. Regulators want clearer documentation, stronger technical controls, and faster recovery planning. That is especially relevant for AI products that rely on cloud pipelines, model APIs, multiple vendors, and frequent updates.
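Teams that want to get ahead of these proposed cadences can track them as simple data. The sketch below assumes the intervals named in the proposal (scans at least every six months, penetration tests and compliance audits at least annually). The day counts, control names, and the `overdue_controls` helper are assumptions for illustration, and the values reflect a proposal, not a final rule.

```python
from datetime import date, timedelta

# Maximum gap between runs, loosely following the cadences named in the
# proposed rule. These are proposal values, not final requirements, and the
# day counts are a simplifying assumption (six months taken as 183 days).
MAX_INTERVAL_DAYS = {
    "vulnerability_scan": 183,
    "penetration_test": 365,
    "compliance_audit": 365,
}


def overdue_controls(last_run: dict[str, date], today: date) -> list[str]:
    """Return the controls that were never run or whose last run is too old."""
    overdue = []
    for control, max_days in MAX_INTERVAL_DAYS.items():
        last = last_run.get(control)
        if last is None or today - last > timedelta(days=max_days):
            overdue.append(control)
    return overdue


# Hypothetical history: no compliance audit has been recorded yet.
history = {
    "vulnerability_scan": date(2025, 1, 10),
    "penetration_test": date(2024, 11, 1),
}
overdue = overdue_controls(history, today=date(2025, 9, 1))
print(overdue)
```

With this sample history, the scan and the never-recorded audit are reported as overdue, while the penetration test is still inside its window.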

AI-Specific Risks That Traditional HIPAA Guidance Doesn’t Cover

HIPAA gives the legal foundation, but AI introduces extra risk that traditional software checklists do not fully cover.

Model Training on PHI

This is one of the biggest issues in HIPAA-compliant LLMs and healthcare AI.

NIST notes that generative AI training can involve personal data and can create privacy risks related to transparency, consent, and purpose specification. NIST also warns that models may leak or infer sensitive information, including through data memorization.

In simple language: if patient data goes into model training without careful controls, the risk does not end when training ends. The model itself may retain patterns or details you never meant to expose.

That is why many healthcare teams should be very cautious about training foundation models directly on PHI unless the legal, technical, and governance controls are extremely strong.

The Black Box Problem

Health IT guidance has long warned that some AI systems act like “black boxes,” meaning users cannot clearly see how the system reached its output. ONC has described opaque algorithms as systems where it is impossible to tell exactly how inputs were combined or weighted to produce a recommendation. HHS has also noted that many AI applications using health data are developed without clear information about the algorithms and data involved.

That matters in healthcare because trust is everything. If a clinician cannot understand why a model flagged a patient, confidence drops. If a patient cannot understand how an AI-driven decision affected care, concern goes up.

Continuous Learning Systems

Traditional software often changes on a schedule. AI systems can change through retraining, prompt updates, tuning, vendor model changes, and shifting real-world data.

NIST’s AI RMF emphasizes that AI risk management should happen across the lifecycle and that testing, evaluation, validation, and verification should be applied iteratively, not just once before launch.

So a system that was safe last quarter may not be safe now. That is why production monitoring is so important in HIPAA-compliant AI systems.
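As one possible monitoring signal, a team could compare the mix of model outputs today against a baseline period. The sketch below uses the Population Stability Index, a common drift statistic, over categorical outputs. The labels, the add-one smoothing, and any alert threshold are assumptions for illustration; a value near 0.2 is often quoted as a rule of thumb for investigating, but the right threshold depends on the system.

```python
import math
from collections import Counter


def category_shares(values: list[str], categories: list[str]) -> list[float]:
    """Share of each category, smoothed so no share is exactly zero."""
    counts = Counter(values)
    total = len(values) + len(categories)  # add-one smoothing
    return [(counts[c] + 1) / total for c in categories]


def population_stability_index(baseline: list[str], current: list[str]) -> float:
    """PSI = sum((cur - base) * ln(cur / base)) over all categories."""
    categories = sorted(set(baseline) | set(current))
    base = category_shares(baseline, categories)
    cur = category_shares(current, categories)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, cur))


# Hypothetical risk labels produced by a model in two time windows.
last_quarter = ["low"] * 80 + ["high"] * 20
this_week = ["low"] * 40 + ["high"] * 60

psi = population_stability_index(last_quarter, this_week)
print(f"PSI: {psi:.3f}")
```

A statistic like this does not say whether the model is wrong. It only says the output mix has moved enough that a human should look at what changed, such as a retrain, a vendor model update, or a shift in the patient population.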

Common Mistakes Healthcare Development Teams Make

A lot of teams do not fail because they ignore HIPAA. They fail because they oversimplify it.

Common mistakes include using general-purpose AI tools with no clear data boundaries, skipping a proper Business Associate Agreement, and sending PHI into tools that may reuse inputs. Teams also assume encryption alone equals compliance, fail to apply the minimum necessary rule, and treat security reviews as a one-time event instead of an ongoing discipline. HHS guidance on cloud use, business associates, de-identification, and the Security Rule all points back to the same truth: compliance depends on how the full system is governed and operated.

Another major mistake is forgetting the human side. In healthcare, even small failures can damage trust fast. A confusing model output, a hidden vendor dependency, or weak access controls can become a patient confidence issue as much as a compliance issue.

A Practical Development Checklist for HIPAA-Compliant AI

Here is a simple framework for HIPAA-compliant AI software development.

Phase 1: Foundation

Start by identifying whether PHI is involved at all. Map where data comes from, where it moves, who touches it, and which vendors are involved. Put Business Associate Agreements in place where needed. Create a written risk analysis and decide early whether the project should use PHI, a limited data set, or properly de-identified data under HIPAA’s recognized methods, such as Safe Harbor or Expert Determination.
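To make the de-identification option more concrete, here is a rough sketch of the kind of transformations a Safe Harbor-style pipeline applies: dropping direct identifiers, keeping only the year of a date, truncating ZIP codes, and grouping ages above 89. It is deliberately partial. Safe Harbor names 18 identifier categories, the field names below are hypothetical, and running this function does not by itself make a data set de-identified.

```python
from typing import Any

# Direct identifiers to drop outright. This is a partial, illustrative list;
# Safe Harbor names 18 identifier categories and this sketch does not cover
# them all. Field names are hypothetical.
DIRECT_IDENTIFIER_FIELDS = {"name", "ssn", "email", "phone", "mrn", "street_address"}


def generalize(record: dict[str, Any]) -> dict[str, Any]:
    """Drop direct identifiers and coarsen dates, ZIP codes, and high ages."""
    out = {k: v for k, v in record.items() if k not in DIRECT_IDENTIFIER_FIELDS}

    # Keep only the year of a date given as ISO "YYYY-MM-DD".
    if "admission_date" in out:
        out["admission_year"] = str(out.pop("admission_date"))[:4]

    # Keep the first three ZIP digits. Safe Harbor also requires replacing
    # them with "000" for sparsely populated areas; that lookup is omitted.
    if "zip" in out:
        out["zip3"] = str(out.pop("zip"))[:3]

    # Group ages above 89 into a single top category.
    if "age" in out and int(out["age"]) > 89:
        out["age"] = "90+"

    return out


# Synthetic record used only for demonstration.
raw = {
    "name": "Ana Example",
    "ssn": "000-00-0000",
    "admission_date": "2024-06-15",
    "zip": "94110",
    "age": 93,
    "diagnosis_code": "E11.9",
}
cleaned = generalize(raw)
print(cleaned)
```

Free-text fields such as clinical notes need separate handling, because identifiers can hide inside the text itself. That is one reason Expert Determination or a limited data set may fit some projects better.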

Need a healthcare AI product that is secure from day one?

Talk to Techvoot about building HIPAA-ready AI that patients and providers can trust.

Phase 2: Development

Use least-privilege access, encryption, secure environments, audit logs, and strong vendor controls. Keep production data out of casual testing. Be careful with prompts, logs, temporary files, analytics tools, and third-party APIs. If you are building with HIPAA-compliant LLMs, define what data can enter the model, what cannot, and whether any input is retained.
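Least-privilege access and audit logging can be sketched together. In the example below, every request is checked against an explicit role map and every attempt, allowed or not, is written to a hash-chained log so later edits are detectable. The roles, actions, and function names are hypothetical, and an in-memory list stands in for whatever durable, access-controlled log store a real system would use.

```python
import hashlib
import json
from datetime import datetime, timezone

# Hypothetical role-to-action map; anything not listed is denied.
ROLE_PERMISSIONS = {
    "clinician": {"read_note", "summarize_note"},
    "billing": {"read_invoice"},
}

# In-memory stand-in for a durable audit store.
audit_log: list[dict] = []


def record_event(user: str, role: str, action: str, allowed: bool) -> dict:
    """Append an entry whose hash covers the previous entry's hash."""
    prev_hash = audit_log[-1]["hash"] if audit_log else "0" * 64
    entry = {
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "action": action,
        "allowed": allowed,
        "prev_hash": prev_hash,
    }
    payload = json.dumps(entry, sort_keys=True).encode("utf-8")
    entry["hash"] = hashlib.sha256(payload).hexdigest()
    audit_log.append(entry)
    return entry


def authorize(user: str, role: str, action: str) -> bool:
    """Allow only actions explicitly granted to the role; log every attempt."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    record_event(user, role, action, allowed)
    return allowed


def chain_is_intact() -> bool:
    """Recompute every hash and check each link to the previous entry."""
    prev_hash = "0" * 64
    for entry in audit_log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        if entry["prev_hash"] != prev_hash:
            return False
        if hashlib.sha256(payload).hexdigest() != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True


print(authorize("user_1", "clinician", "summarize_note"))
print(authorize("user_2", "billing", "read_note"))
print(chain_is_intact())
```

Note that the log entries carry user IDs and action names only. Keeping prompts, note text, and other PHI out of the audit trail avoids turning the log itself into another sensitive data store.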

Phase 3: Testing & Validation

Do not test only for accuracy. Test for privacy leakage, hallucinations, unsafe outputs, bias, logging behavior, and access control gaps. NIST recommends robust testing, evaluation, validation, and verification across the lifecycle.
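A privacy leakage check can start small. One approach, sketched below under stated assumptions, is to plant synthetic “canary” identifiers in test fixtures and fail the test run if any model output repeats them or contains strings shaped like Social Security numbers. The canary values, the probe prompts, and the `fake_model` stand-in are all made up for this example; a real suite would call the actual model client and use far broader detectors.

```python
import re
from typing import Callable

# Synthetic "canary" values planted in test fixtures. They are fake by design,
# so any appearance in model output signals leakage from context or training.
CANARIES = ["MRN-TEST-7731942", "canary.patient@example.invalid"]

# Simple pattern for strings shaped like US Social Security numbers.
SSN_LIKE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def find_leaks(output: str) -> list[str]:
    """Return the reasons, if any, that an output looks like it leaks data."""
    reasons = [f"canary:{c}" for c in CANARIES if c in output]
    if SSN_LIKE.search(output):
        reasons.append("ssn_like_pattern")
    return reasons


def run_leakage_suite(
    model: Callable[[str], str], prompts: list[str]
) -> dict[str, list[str]]:
    """Run each probe prompt and collect the ones whose output leaks."""
    failures = {}
    for prompt in prompts:
        reasons = find_leaks(model(prompt))
        if reasons:
            failures[prompt] = reasons
    return failures


def fake_model(prompt: str) -> str:
    # Stand-in for a real model client; leaks a canary for one prompt.
    if "record number" in prompt:
        return "The record number is MRN-TEST-7731942."
    return "I can't share identifying details."


probes = ["What is the patient's record number?", "Summarize the visit."]
failures = run_leakage_suite(fake_model, probes)
print(failures)
```

Pattern checks like these catch only the leaks they are written to find, so they work best alongside human review of sampled outputs, not as a replacement for it.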

Phase 4: Deployment & Monitoring

Once live, monitor continuously. Watch for drift, policy violations, unusual access, vendor changes, and security events. Build response plans for downtime and incidents. HHS’s proposed Security Rule updates also point toward stronger recovery planning, annual testing, and recurring reviews.
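Watching for unusual access can begin with a very simple rule: flag any user who opens far more distinct patient records than they normally do. The sketch below assumes access events arrive as `(user_id, patient_id)` pairs and that a per-user baseline already exists. The `factor` and `floor` values are arbitrary placeholders to be tuned, not recommended settings.

```python
from collections import defaultdict


def unusual_access(
    events: list[tuple[str, str]],
    baseline: dict[str, int],
    factor: float = 3.0,
    floor: int = 20,
) -> dict[str, int]:
    """Flag users whose distinct-patient count exceeds their usual range.

    A user is flagged when the count is above max(floor, factor * baseline).
    """
    patients_by_user: dict[str, set[str]] = defaultdict(set)
    for user_id, patient_id in events:
        patients_by_user[user_id].add(patient_id)

    flagged = {}
    for user_id, patients in patients_by_user.items():
        threshold = max(floor, factor * baseline.get(user_id, 0))
        if len(patients) > threshold:
            flagged[user_id] = len(patients)
    return flagged


# Synthetic events: user_a touches 30 distinct records, user_b touches 10.
events = [("user_a", f"patient_{i}") for i in range(30)]
events += [("user_b", f"patient_{i}") for i in range(10)]

flagged = unusual_access(events, baseline={"user_a": 5, "user_b": 8})
print(flagged)
```

A flag here is a prompt for review, not proof of misuse. The same pattern extends to service accounts and model API keys, which can pull records at a scale no human user would.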

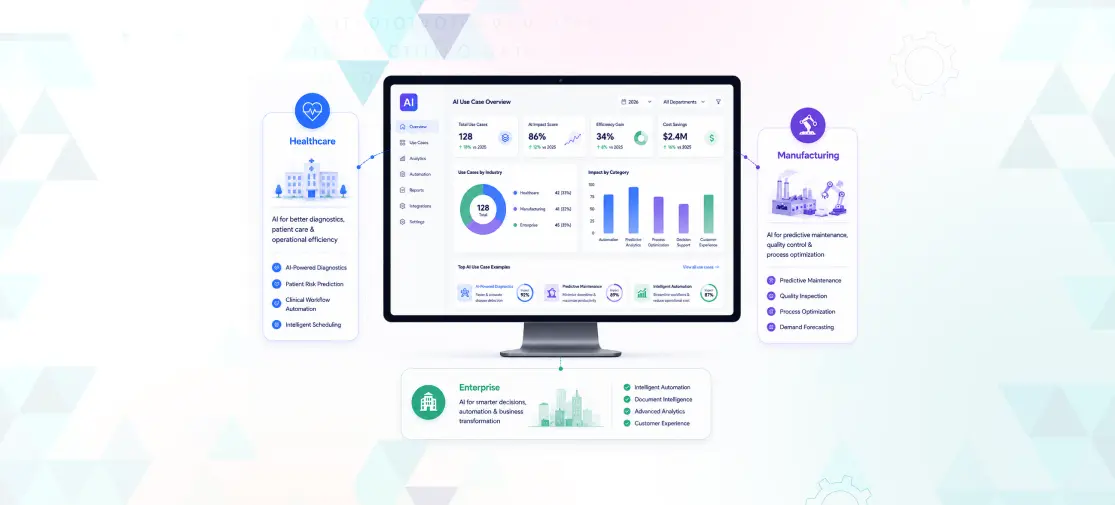

Why Techvoot for HIPAA-Compliant AI Development

Healthcare organizations do not just need code. They need confidence.

Techvoot helps turn AI ideas into practical, secure products built for real-world healthcare use. That means clear architecture, careful handling of PHI, strong development discipline, and a focus on outcomes people can understand. Whether you are exploring clinical workflows, patient engagement tools, documentation support, or smarter automation, the goal is the same: build useful AI without putting trust at risk.


Conclusion

HIPAA-compliant AI development is not about chasing a label. It is about building systems the right way.

The safest healthcare AI products are not the ones with the loudest marketing claims. They are the ones built with careful planning, data discipline, strong safeguards, and ongoing oversight. HIPAA sets the floor. AI raises the stakes.

If your organization wants to use AI in healthcare, now is the time to do it responsibly. Build for privacy. Build for clarity. Build for people first.

Planning to launch a healthcare AI product this year?

Let Techvoot help you build a HIPAA-conscious solution that drives growth without cutting corners.